May 13, 2026

Top 7 Labelbox Alternatives for Computer Vision Teams (2026 Buyer's Guide)

Labelbox has been a defining platform in the data annotation category for years. It's the tool most enterprise ML teams have used or at least evaluated. It's capable, mature, and backed by solid engineering.

It's also not the right fit for a growing number of teams — particularly those focused specifically on computer vision, those where pricing becomes painful at scale, and those that have outgrown the 'annotation platform plus ten other tools' stitched stack.

If you're evaluating alternatives to Labelbox, here are seven worth serious consideration. We'll cover what each platform does well, where it falls short, and who should actually shortlist it.

Full disclosure: Intellabel is on this list. We'll be explicit about where we win, where we don't, and where other platforms will serve you better. A buyer's guide that claims its own product is always the best is a sales document, not a guide.

Why Teams Look at Labelbox Alternatives

Before the list, it's worth being precise about why teams leave Labelbox or choose not to start there. The reasons cluster into five patterns:

Pricing at scale. Labelbox's pricing model has a reputation for scaling aggressively past certain volume thresholds. Teams often find that what was affordable at 100,000 labels a year becomes a line item that requires CFO sign-off at 5M labels a year.

MLOps gap. Labelbox is excellent at annotation and dataset operations. It's not trying to be a training platform, a model registry, or a production monitoring system. If you're tired of stitching those together, you're looking for something different.

CV-specific depth. Labelbox is multimodal. If your data is 95% images and video, some of what you're paying for — document annotation, text-and-LLM workflows — is breadth you don't need.

Medical or specialized domain fit. DICOM workflows, pathology imaging, and specific regulated domains have better-suited alternatives.

Self-hosting or data residency. Some enterprises have strict data residency requirements or security policies that favor self-hosted deployments. Labelbox's story here is improving but remains more constrained than several alternatives.
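To make the pricing-at-scale pattern concrete, here is a minimal sketch of how a volume-based plan can turn a modest bill into a large one. Every number below is invented for illustration: the flat fee, the included volume, and the overage rate are assumptions, not Labelbox's or any other vendor's actual pricing.

```python
# Hypothetical plan, invented for illustration only (not any vendor's real pricing):
# a flat annual fee covers a block of labels, and every label beyond it is billed as overage.
BASE_FEE_CENTS = 1_000_000       # $10,000 per year (assumed)
INCLUDED_LABELS = 100_000        # labels covered by the flat fee (assumed)
OVERAGE_CENTS_PER_LABEL = 8      # $0.08 per label past the included block (assumed)


def annual_cost_cents(labels_per_year: int) -> int:
    """Return the yearly cost in cents under the hypothetical plan above."""
    overage_labels = max(0, labels_per_year - INCLUDED_LABELS)
    return BASE_FEE_CENTS + overage_labels * OVERAGE_CENTS_PER_LABEL


# Compare the bill at a few annual volumes.
for volume in (100_000, 1_000_000, 5_000_000):
    print(f"{volume:>9,} labels/year -> ${annual_cost_cents(volume) / 100:,.0f}")
```

Under these made-up numbers, the bill goes from $10,000 at 100,000 labels to $402,000 at 5M labels. Plug in the rates from your own quote to see where your threshold sits.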

1. Intellabel

Positioning: A unified AI Data Operations platform built specifically for computer vision, covering annotation through MLOps in a single connected workflow.

Strengths: End-to-end coverage from raw image to production model — annotation with AI-assisted pre-labels, dataset versioning with full lineage, multi-stage QA with audit trails, native training pipelines, model registry with promotion controls, drift monitoring. No integration seams. Transparent pricing with published tiers. Enterprise-grade security (SSO, RBAC, encryption, ISO-aligned controls). Active learning integrated rather than bolted on.

Honest weaknesses: CV-focused. If your data includes significant text, audio, or LLM components, Intellabel is not the right choice. Newer than Labelbox, so its ecosystem of third-party integrations is more limited. Medical imaging support is solid, but Encord has more specialized depth in that vertical.

Best fit: CV teams that are tired of stitching 4-6 tools together and want the full data-to-model loop in one platform, with proper governance and pricing that doesn't punish growth.

2. Encord

Positioning: A multimodal AI data platform with particularly strong medical imaging and active learning capabilities.

Strengths: Deep DICOM and NIfTI support for medical imaging. Multimodal handling of images, video, audio, text, and documents. Active learning and data curation are genuinely first-class features rather than afterthoughts. Strong model evaluation capabilities. HIPAA and SOC 2 posture.

Honest weaknesses: MLOps integration is lighter than it appears — training, model registry, and production monitoring typically happen elsewhere. Learning curve is steeper than most teams expect. Pricing requires custom quotes.

Best fit: Medical imaging teams, multimodal AI teams, and organizations that prioritize active learning as a core workflow.

3. SuperAnnotate

Positioning: An annotation-first platform with strong workforce management and team velocity features.

Strengths: Polished UI for complex polygon and mask work. Strong dashboards for throughput and quality tracking. Optional managed workforce available through their marketplace, which can be genuinely useful for teams that want a hybrid in-house-plus-external model. Good collaboration features.

Honest weaknesses: Like Labelbox, MLOps is outside their scope. Scaling can be uneven across data types — text and audio workflows feel less polished than image and video. Pricing is negotiated, which makes budget planning harder.

Best fit: Teams that need strong annotation throughput and are comfortable with an annotation-platform-plus-other-tools architecture, particularly those who want workforce flexibility.

4. CVAT

Positioning: Open-source annotation tool, widely used by research and engineering-led teams.

Strengths: Free. Fully open source. Self-hostable. Strong image and video annotation capabilities with support for standard formats (COCO, YOLO, Pascal VOC). Plugin ecosystem for model-assisted labeling. Huge community.

Honest weaknesses: You're the platform team. Uptime, scaling, QA, governance, audit trails, and training integration are all your problem. It starts to feel like a single-user tool past about 10,000 frames. No model registry, no experiment tracking, no production monitoring. Enterprise compliance is something you build yourself.

Best fit: Research projects, early prototypes, and organizations with strong DevOps capacity that value maximum control.

5. Roboflow

Positioning: A computer vision-focused platform emphasizing dataset operations, preprocessing, augmentation, and quick model training.

Strengths: Fast onboarding — you can be labeling and training within an afternoon. Strong dataset ops and augmentation tooling. Good export formats and model deployment options for common architectures. Active community and educational content.

Honest weaknesses: Governance and enterprise controls are lighter than enterprise-focused platforms. QA workflow is simpler — works well for smaller teams, creaks at scale. Advanced MLOps features (model registry, drift monitoring, staged deployment) are not as deep.

Best fit: Startups and small teams that want to move fast, or larger teams prototyping a specific model before committing to infrastructure.

6. V7 Darwin

Positioning: High-speed annotation platform focused on computer vision, particularly strong for complex segmentation.

Strengths: Very fast auto-annotation and keyboard-efficient editor. Great for tasks with lots of segmentation or dense polygon work. Strong video annotation. Focused on CV without multimodal breadth dilution.

Honest weaknesses: Less depth on dataset versioning, governance, and MLOps than dedicated data ops platforms. Pricing tilts premium at scale. Limited self-hosting options.

Best fit: Teams doing heavy segmentation work who will pay for speed, particularly in medical or biological imaging where polygon density is high.

7. Scale AI

Positioning: Service-first provider combining platform with a managed global annotation workforce, particularly strong in autonomous vehicles and GenAI training data.

Strengths: Large managed workforce at scale. Strong track record with large-volume enterprise deals. Established in safety-critical verticals. Good for teams that want to fully outsource annotation.

Honest weaknesses: Less platform-centric than the others on this list. If you want to bring your own annotators or run a hybrid of in-house and external labelers, the fit is less natural. Pricing is aggressively enterprise. Platform features for teams that do their own labeling are less comprehensive.

Best fit: Large enterprises, particularly in AV/AD or specialized GenAI data collection, that prefer a service-first model over a self-serve platform.

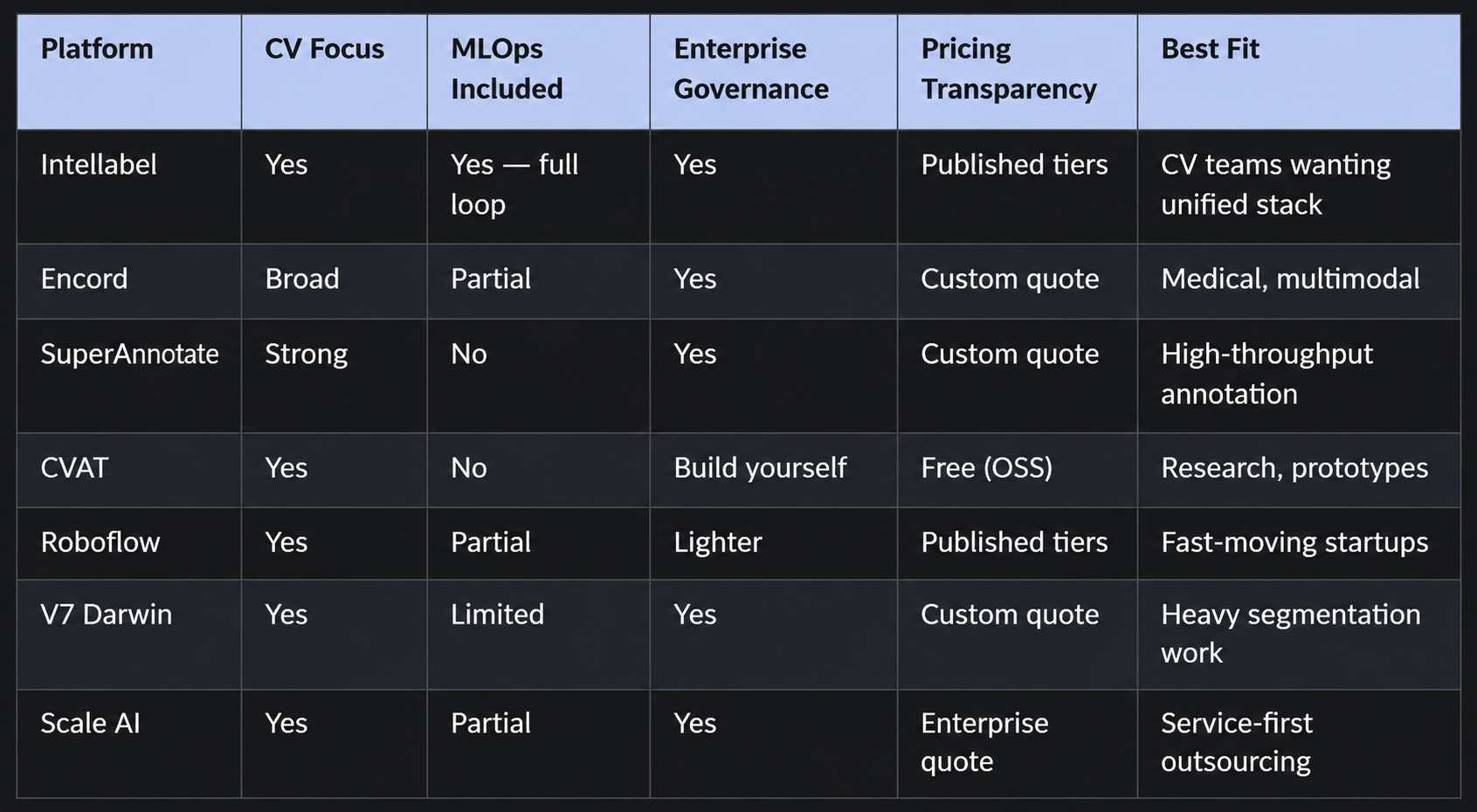

Quick-Reference Comparison

The comparison table is for shortlisting, not for final decisions. Always run a real pilot.

How to Actually Pick

After reading twenty comparison articles, here's the framework that works.

Step 1: Eliminate on hard constraints. If you need self-hosting and a platform doesn't offer it, cross it off. If you need DICOM support and a platform's support is weak, cross it off. Hard constraints are deal-breakers, so respect them.

Step 2: Shortlist to two or three platforms that fit the constraints.

Step 3: Run a real pilot on each. Load 1,000 representative images from your actual data. Configure your real taxonomy. Run the QA workflow you actually want. Export to your real training pipeline. Time it all.

Step 4: Get total-cost quotes from each, at your projected annual volume, with all features you need. Compare apples to apples.

Step 5: Check references. Not logo slides — actual customer calls with someone using the platform for a use case like yours.
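If it helps to keep the evaluation honest, the steps above can be sketched as a small script. This is one possible way to structure the bookkeeping, not a prescribed tool. The platform names, feature tags, pilot times, and quotes are all hypothetical placeholders; replace them with what you verify in your own pilots and quotes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Platform:
    name: str
    features: frozenset  # capabilities you have verified yourself, e.g. {"self_hosting", "dicom"}


@dataclass(frozen=True)
class PilotResult:
    platform: str
    pilot_hours: float   # Step 3: wall-clock time for the full pilot
    annual_quote: float  # Step 4: total-cost quote at your projected volume


def eliminate(platforms, hard_constraints):
    """Step 1: keep only platforms that satisfy every hard constraint."""
    required = frozenset(hard_constraints)
    return [p for p in platforms if required <= p.features]


def cost_per_label(result, projected_labels_per_year):
    """Step 4: normalize each quote to the same projected annual volume."""
    return result.annual_quote / projected_labels_per_year


# Hypothetical candidates; names and feature tags are placeholders.
platforms = [
    Platform("Platform A", frozenset({"self_hosting", "dicom", "sso"})),
    Platform("Platform B", frozenset({"sso"})),
    Platform("Platform C", frozenset({"self_hosting", "sso"})),
]

# Steps 1 and 2: anything missing a hard constraint is crossed off.
survivors = eliminate(platforms, {"self_hosting", "sso"})
print([p.name for p in survivors])  # ['Platform A', 'Platform C']

# Steps 3 and 4: record pilot time and quotes (invented numbers), then compare like with like.
results = [
    PilotResult("Platform A", pilot_hours=6.5, annual_quote=120_000.0),
    PilotResult("Platform C", pilot_hours=4.0, annual_quote=90_000.0),
]
for r in sorted(results, key=lambda r: cost_per_label(r, 5_000_000)):
    print(f"{r.platform}: {cost_per_label(r, 5_000_000):.4f} per label, pilot took {r.pilot_hours} h")
```

Reference calls (Step 5) don't reduce to a number, so they stay outside the script. Treat the ranking as input to that conversation, not a verdict.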

A platform decision made on vendor slides is a platform decision you'll regret. A platform decision made on a real pilot and real references is one that holds up.

If Intellabel makes your shortlist, we're happy to run the pilot on your data, at no cost, with our engineering team on the call to answer real questions. Book a demo and we'll set it up.
